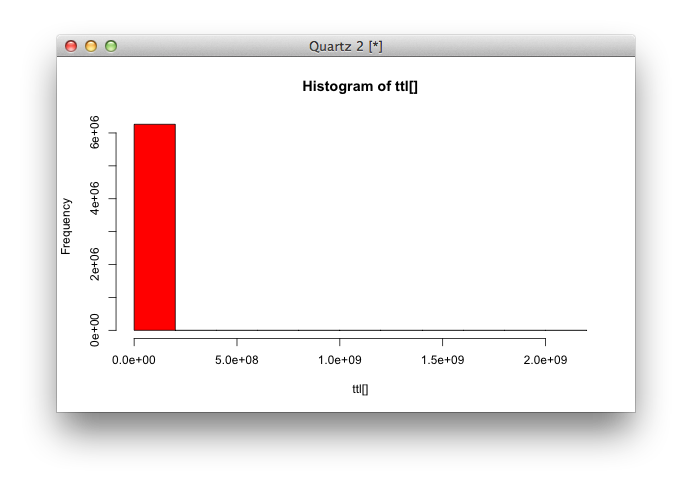

I recently sampled 348,876,495 valid A queries (queries for which records actually exist) processed by OpenDNS servers. They cover 6,263,672 unique names.

I then wrote a simple app that dumps the initial TTL (as reported by the authoritative servers) for each of these unique names, to see what the TTL distribution looks like. The data was then processed with R.
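The app itself isn't shown here, so the following is only a rough sketch of the part that reads TTLs out of a raw DNS response, using nothing but the Python standard library. The function names `skip_name` and `answer_ttls` are made up for this illustration, and sending the query to the right authoritative server is deliberately left out.

```python
import struct

def skip_name(msg, offset):
    """Return the offset just past a (possibly compressed) domain name."""
    while True:
        length = msg[offset]
        if length == 0:                # root label terminates the name
            return offset + 1
        if length & 0xC0 == 0xC0:      # 2-byte compression pointer terminates the name
            return offset + 2
        offset += 1 + length           # ordinary label: length byte + label bytes

def answer_ttls(msg):
    """Return the TTL of every record in the answer section of a DNS message."""
    # Header: ID, flags, QDCOUNT, ANCOUNT, NSCOUNT, ARCOUNT (six 16-bit fields).
    _id, _flags, qdcount, ancount, _ns, _ar = struct.unpack("!6H", msg[:12])
    offset = 12
    for _ in range(qdcount):
        offset = skip_name(msg, offset) + 4   # skip QTYPE and QCLASS
    ttls = []
    for _ in range(ancount):
        offset = skip_name(msg, offset)
        # Fixed part of a resource record: TYPE, CLASS, TTL (32 bits), RDLENGTH.
        _rtype, _rclass, ttl, rdlength = struct.unpack(
            "!HHIH", msg[offset:offset + 10])
        ttls.append(ttl)
        offset += 10 + rdlength               # skip RDATA
    return ttls
```

To get the initial TTL rather than a value already counted down by a cache, the response has to come straight from an authoritative server for the zone.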

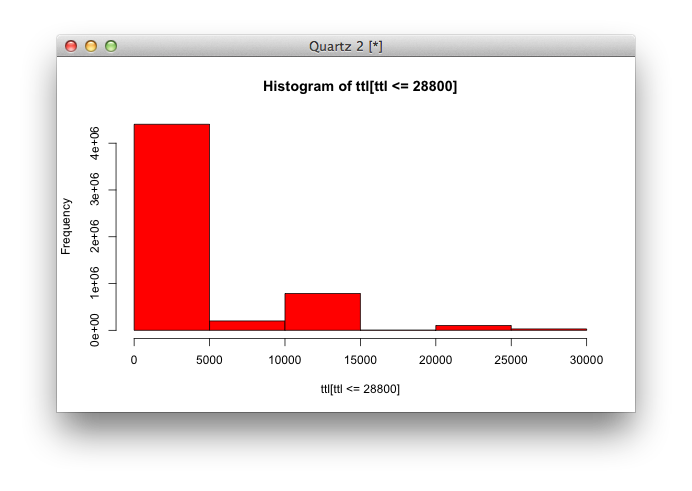

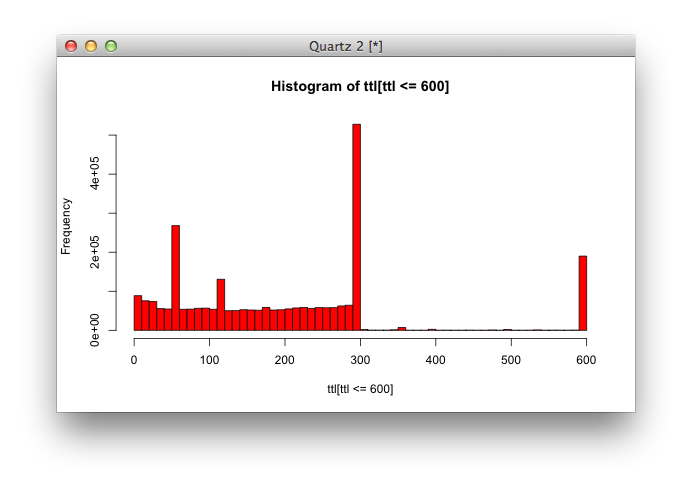

Well, the TTL distribution looks very unbalanced, to say the least.

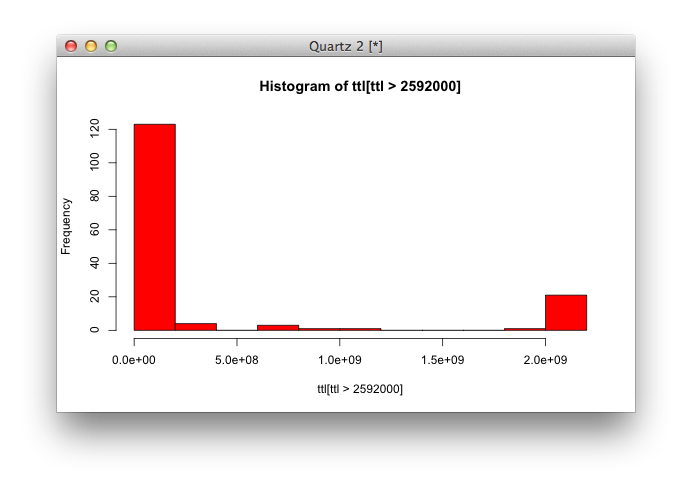

Do a lot of people publish records with a TTL that is longer than 30 days?

No. Apparently, only a ridiculously small number of records have a TTL longer than 30 days.

It’s fun to see insane TTLs like 68 years (bbs.oouc.cn, beechglen.com, canadianangling.com, capitoltalk.com, durhambannerexchange.com, elimport.co.il, epictn.org, glutathioneforhealth.com, greeley.ca, gregsushinsky.com, …), although these are probably configuration errors.
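A quick back-of-the-envelope check on where 68 years could come from: the largest TTL allowed by RFC 2181 is 2^31 - 1 seconds. That these particular records carry exactly that value is an assumption, since only the rounded figure is quoted above.

```python
MAX_TTL = 2**31 - 1                      # largest TTL permitted by RFC 2181, in seconds
SECONDS_PER_YEAR = 365.25 * 24 * 3600    # average year, leap days included

max_ttl_years = MAX_TTL / SECONDS_PER_YEAR
print(f"{MAX_TTL} seconds is about {max_ttl_years:.1f} years")
```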

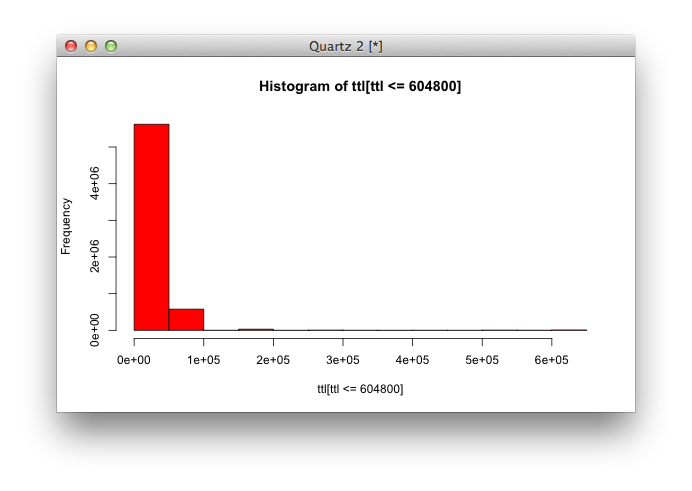

Let’s zoom in on more reasonable TTLs, those of 1 week or less.

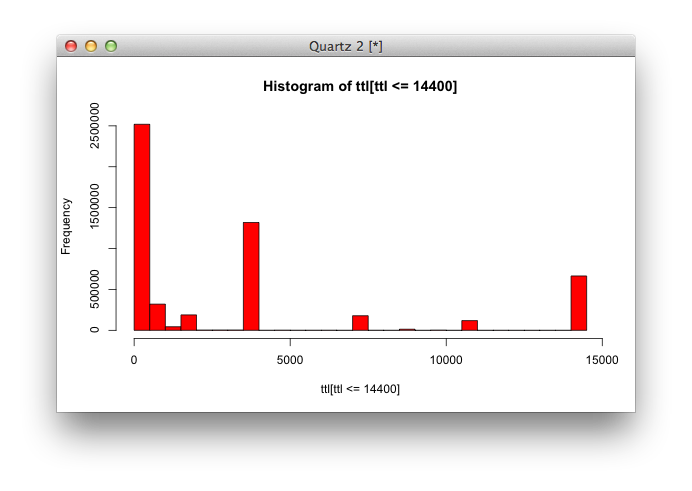

Wow. Apparently, even TTLs longer than 1 day are very rare. So let’s shrink the window to TTLs that are no longer than 1 day.

A fair amount of records have been configured with a TTL that is exactly 1 day, but the vast majority seems to be below 4 hours.

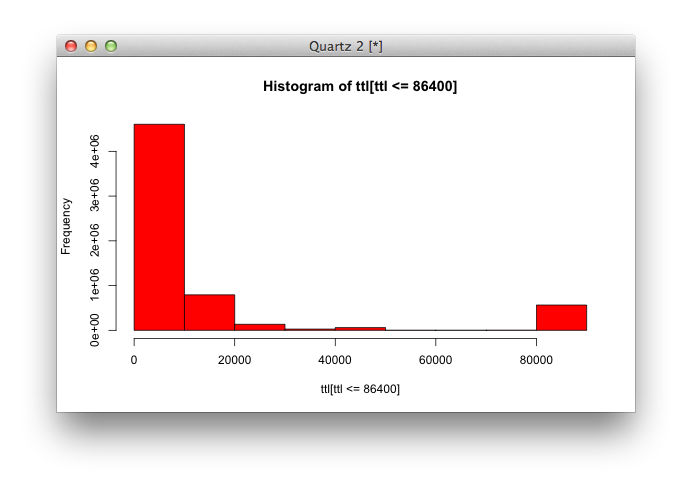

Let’s zoom in.

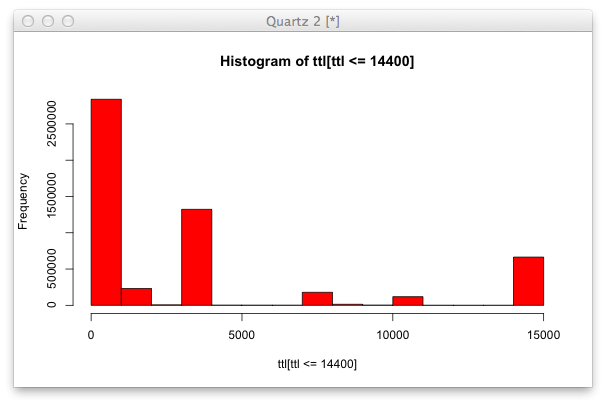

OK, at this point, it’s probably reasonable to keep zooming in:

or with 10 segments:

This is still a very unbalanced distribution. 4-hour TTLs are common, 1-hour TTLs are more common, but the vast majority seems to be below 15 minutes.
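The plots here were made with R. As a rough Python equivalent of "zooming in" with a fixed number of segments, here is a minimal sketch. The function name `ttl_histogram` and the sample TTLs are invented for illustration and are not the real data.

```python
def ttl_histogram(ttls, max_ttl, segments):
    """Count TTLs in [0, max_ttl] using `segments` equal-width buckets."""
    width = max_ttl / segments
    counts = [0] * segments
    for ttl in ttls:
        if 0 <= ttl <= max_ttl:
            # A TTL equal to max_ttl falls into the last bucket.
            index = min(int(ttl / width), segments - 1)
            counts[index] += 1
    return counts

# Made-up sample: three short TTLs, one 1-hour, one 4-hour, one 1-day.
sample = [60, 300, 300, 3600, 14400, 86400]
print(ttl_histogram(sample, max_ttl=14400, segments=4))
```

Shrinking `max_ttl` while keeping `segments` constant is what each zoom step amounts to: the same number of buckets spread over a narrower window.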

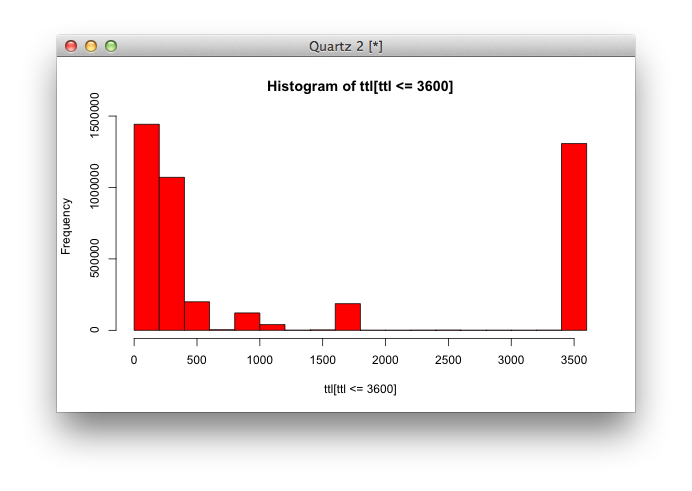

TTLs below 1 hour represent the hot spot, so let’s zoom in:

There’s a fair number of records with a 1-hour TTL, a large number of records with a TTL of 10 minutes or less, and pretty much nothing in between.

Let’s see what TTLs below 10 minutes look like:

So records within this interval have TTLs that are either below 5 minutes (with peaks at 1 minute and 2 minutes) or at 10 minutes.

In summary:

| TTL | Share of records |
|---|---|
| TTL = 0 | 0.16 % |
| TTL <= 1 minute | 9.84 % |
| TTL <= 2 minutes | 16.32 % |
| TTL <= 5 minutes | 39.88 % |
| TTL <= 1 hour | 70.07 % |
| TTL <= 1 day | 98.89 % |
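For reference, cumulative shares like these can be computed from a list of TTLs in a few lines. This is only a sketch: the function name `cumulative_shares` and the sample values are made up, and the real numbers came out of R.

```python
def cumulative_shares(ttls, thresholds):
    """Return {threshold: percentage of TTLs that are <= threshold}."""
    if not ttls:
        raise ValueError("need at least one TTL")
    total = len(ttls)
    return {
        t: 100.0 * sum(1 for ttl in ttls if ttl <= t) / total
        for t in thresholds
    }

# Made-up sample of ten TTLs, in seconds.
sample = [0, 30, 60, 120, 300, 300, 3600, 3600, 86400, 604800]
for threshold, share in cumulative_shares(sample, [60, 300, 3600, 86400]).items():
    print(f"TTL <= {threshold:>6} s: {share:.2f} %")
```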

Looks like there are still ways to make the internet faster.